The Autonomy Illusion: How Human Judgment Is Emerging as AI’s New Scale Factor

Published on 13 Apr, 2026

AI may automate execution, but human judgment is emerging as the real scale factor behind safe enterprise deployment. In high-stakes environments, scalable trust will depend on how effectively organizations institutionalize human oversight.

Early enterprise deployments suggest the “Do It For Me” promise of AI is encountering structural limits in high-stakes environments. Instead of replacing expert judgment, AI is reshaping how it is applied. A new operating model is emerging: human judgment is becoming the infrastructure that allows AI to scale safely.

EXECUTIVE SUMMARY

Enterprise AI adoption is accelerating, but its path is diverging from early autonomy-first expectations. In regulated, safety-critical, and reputationally sensitive environments, limits are set less by model capability and more by governance, liability, and trust. As a result, leading organizations are redesigning AI workflows to hard-wire human judgment at decisive moments. This is driving the emergence of Judgment-as-a-Service: expert validation delivered as a scalable, on-demand layer within enterprise AI systems.

THE THREE STAGES OF ADOPTION

Enterprise leaders increasingly map AI maturity across three distinct phases. While the market pushes toward Stage 3, the reality of deployment is revealing unanticipated friction.

| Stage | Paradigm | Role of AI | Operational Reality |
|---|---|---|---|
| 01 | Advise Me | Suggestive | User prompts; AI generates drafts. Low risk, humans initiate all action. |
| 02 | Do It With Me | Collaborative | AI functions as a co-pilot. Joint execution with constant human oversight. |
| 03 | Do It For Me | Autonomous | Current Friction Point: Fully independent execution fails governance standards in regulated environments. |

Progress toward autonomy continues in low-risk tasks. However, in domains where accountability is non-transferable, adoption increasingly stalls.

WHAT IS CHANGING INSIDE ENTERPRISE AI DEPLOYMENTS

While it’s possible to plug AI agents into existing workflows, reaping the full potential of the technology calls for reimagining those workflows from the ground up, with agents at the core.

Work will need to be redesigned around collaboration between humans and agents. But agents should not sit at the center of every workflow; they still lack many human capabilities and require oversight, among other limitations. For all their potency, AI agents don’t have all the answers.

Organizations need to maintain critical human skills, including emotional intelligence combined with technical skills. Across early large-scale deployments, a directionally consistent pattern is emerging: experts are shifting from producing outputs to certifying them.

Healthcare

Clinicians validate AI-assisted diagnostics before final clinical decisions.

Legal & Compliance

Attorneys review AI-generated analyses for jurisdictional and regulatory defensibility.

Financial Risk

Senior officers certify forecasts and stress-test outputs prior to submission.

Cybersecurity

Specialists assess model behavior as potentially untrusted system outputs.

Public Policy

Domain experts review AI recommendations to ensure statutory alignment.

Manufacturing

Engineers and plant leaders validate maintenance and shutdown recommendations before execution.

Human oversight is no longer an exception. It is becoming a core design layer in enterprise AI systems.

WHY IS THIS TREND ACCELERATING?

Four structural forces appear to be converging, pointing toward human-in-the-loop becoming a durable feature of enterprise AI architecture.

| Structural Driver | Business Implication |
|---|---|
| Governance Lag | Human validation is increasingly viewed as the fastest path to safe deployment |
| Rising Exposure | Risk ownership shifts from models to decision frameworks |
| Model Opacity | Independent review layers become mandatory, not optional |
| Interface Maturity | Expert judgment scales without requiring deep technical expertise |

Together, these forces shift AI architecture from pure automation to managed decision systems.

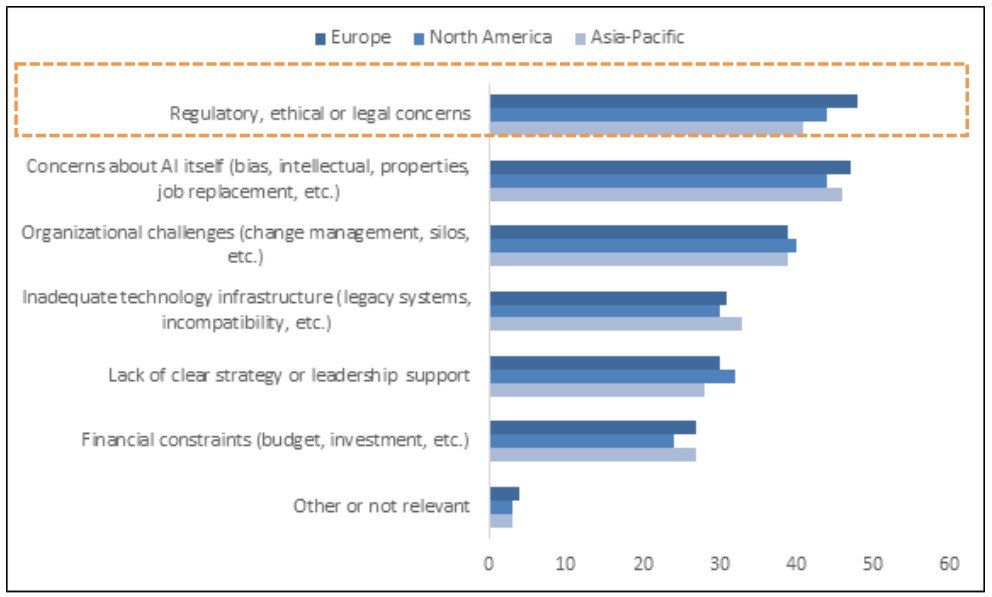

Survey respondents cited ethical and regulatory concerns as top barriers to adopting AI.

Top barriers preventing organizations from adopting AI at scale, % of respondents (n = 3,763)

Source: McKinsey & Company: The state of organizations 2026 report

THREE STRATEGIC IMPERATIVES

For leadership, the "Do It For Me" vision must be reframed from technical autonomy to managed accountability.

Acknowledge the "Autonomy Gap"

In high-stakes environments, unvalidated AI output creates unacceptable risk exposure. AI systems must therefore be designed with the assumption that outputs are probabilistic recommendations, not authoritative decisions.
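One way to make this design assumption concrete is a routing gate that treats every model output as a recommendation and lets it execute automatically only when both its risk tier and its confidence allow. The following is a minimal sketch, not a reference implementation: the names `Recommendation` and `route`, the risk tiers, and the 0.95 threshold are all illustrative assumptions rather than details of any specific deployment.

```python
from dataclasses import dataclass

# Hypothetical risk tiers and threshold, chosen for illustration only.
HIGH_STAKES_TIERS = {"clinical", "legal", "financial"}
AUTO_EXECUTE_CONFIDENCE = 0.95


@dataclass(frozen=True)
class Recommendation:
    """An AI output treated as a probabilistic recommendation."""
    action: str
    confidence: float  # model-reported confidence in [0, 1]
    risk_tier: str     # e.g. "clinical" or "routine"


def route(rec: Recommendation) -> str:
    """Return "human_review" or "auto_execute" for a recommendation."""
    # High-stakes outputs always go to an expert, regardless of confidence.
    if rec.risk_tier in HIGH_STAKES_TIERS:
        return "human_review"
    # Low-risk outputs still need review when the model is unsure.
    if rec.confidence < AUTO_EXECUTE_CONFIDENCE:
        return "human_review"
    return "auto_execute"
```

Under these assumptions, a high-confidence clinical recommendation is still routed to a human, while only low-risk, high-confidence outputs run unattended.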

Redefine Talent Acquisition

Validation expertise is difficult to scale through traditional hiring. Organizations increasingly require on-demand networks of credentialed experts (clinicians, engineers, attorneys) who are accessible at critical decision nodes.

Institutionalize Governance

Validation protocols increasingly need to be auditable. "Human-in-the-loop" is not a slogan; it requires defined escalation paths, sign-off authority, and measurable quality SLAs.
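The three elements named above (escalation paths, sign-off authority, and measurable SLAs) can be captured in a small auditable record. This is a hedged sketch under stated assumptions: the roles in `ESCALATION_PATH`, the four-hour `REVIEW_SLA`, and the `ValidationRecord` class are hypothetical and would be set by each organization's own governance policy.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical escalation path: each role escalates to the next one.
ESCALATION_PATH = ["reviewer", "senior_reviewer", "risk_officer"]
REVIEW_SLA = timedelta(hours=4)  # assumed turnaround target


@dataclass
class ValidationRecord:
    """Auditable record of one human validation of an AI output."""
    output_id: str
    assigned_role: str
    requested_at: datetime
    signed_off_by: Optional[str] = None
    signed_off_at: Optional[datetime] = None

    def sign_off(self, expert: str, when: datetime) -> None:
        """Record who approved the output and when."""
        self.signed_off_by = expert
        self.signed_off_at = when

    def sla_met(self) -> Optional[bool]:
        """True or False once signed off; None while still pending."""
        if self.signed_off_at is None:
            return None
        return self.signed_off_at - self.requested_at <= REVIEW_SLA

    def escalate(self) -> str:
        """Move to the next role; the top role escalates to itself."""
        idx = ESCALATION_PATH.index(self.assigned_role)
        top = len(ESCALATION_PATH) - 1
        self.assigned_role = ESCALATION_PATH[min(idx + 1, top)]
        return self.assigned_role
```

Storing each record as it is created gives auditors a trail of who signed off, at what level, and whether the turnaround target was met.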

Most organizations lack the capacity to maintain large pools of credentialed experts solely for intermittent validation tasks. At the same time, governance requirements increasingly demand auditable validation layers embedded directly into AI workflows.

As a result, what is emerging is not merely a new operational practice, but the early formation of a services ecosystem designed to provide scalable human judgment alongside AI systems.

FOUR MARKET OPPORTUNITIES

A new services landscape is forming at the intersection of AI scale and human trust.

Validated AI Services

Third-party certification layers for high-stakes industries (e.g., "Audited AI Diagnostics").

Expert Marketplaces

Platforms connecting enterprises with credentialed validators for micro-task engagements.

Validation Standards

Defining the ISO-equivalent benchmarks for AI output quality and safety.

Governance Playbooks

Operational frameworks for embedding human sign-off into automated workflows.

CONCLUSION

Early enterprise experience is challenging the assumption that AI will eliminate the need for human expertise.

Automation does not remove judgment; instead, it concentrates its value.

As AI systems scale, the primary differentiator is beginning to shift from compute power to the quality of the human judgment verifying that compute.

Over the next 24–36 months, the organizations that scale AI successfully are likely to be those that treat human judgment not as friction, but as infrastructure.

Does your organization have a systematic mechanism for judgment? If not, you are not accumulating innovation; you are accumulating risk.